The AI economy is running on broken infrastructure.

Four metrics explain every missed AI deployment, stranded GPU, and 24-month grid queue. The stack we inherited from cloud was not designed for the physics of AI workloads.

Factory-built AI compute pods.

PODOS AI designs modular compute pods that arrive as engineered infrastructure units — built for power, cooling, compute density, deployment speed, and operational flexibility.

Modular pod architecture

Engineered infrastructure units, not custom one-offs. A repeatable design with predictable interfaces means faster site work, simpler operations, and a lower-variance commissioning timeline.

Factory-built construction

Pods are produced in a controlled environment with consistent quality, then shipped as completed assemblies. Off-site construction runs in parallel with site preparation.
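
The scheduling benefit of running factory construction alongside site preparation can be sketched as a small calculation. This is a minimal illustration, not PODOS's planning model: the function names are hypothetical, and the day counts in the usage lines are placeholders chosen only to show the arithmetic, not figures from PODOS.

```python
def sequential_days(build: int, site_prep: int, commissioning: int) -> int:
    """Timeline when construction waits for site preparation to finish."""
    return build + site_prep + commissioning


def parallel_days(build: int, site_prep: int, commissioning: int) -> int:
    """Timeline when the pod is built off-site while the site is prepared.

    Commissioning can only start once both the pod and the site are ready,
    so the longer of the two parallel tasks sets the pace.
    """
    return max(build, site_prep) + commissioning


if __name__ == "__main__":
    # Placeholder durations in days (assumptions for illustration only).
    build, site_prep, commissioning = 90, 60, 14
    print("sequential:", sequential_days(build, site_prep, commissioning))
    print("parallel:  ", parallel_days(build, site_prep, commissioning))
```

Under these assumptions the parallel path is shorter by the duration of whichever task is hidden behind the other, which is why the shorter task (here, site preparation) stops contributing to the total.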

High-density compute

Dense GPU and accelerator configurations engineered for AI workloads, with the cooling and power to match.

Deployable at facilities

Designed to be placed where compute is actually needed — campuses, sites, edge locations.

Fast commissioning

Standardized hookups for power, network, and cooling cut weeks off on-site bring-up.

Secure, controlled enclosure

Hardened pod chassis with controlled access, environmental sealing, and integrated monitoring.

Deploy one megawatt in 90–120 days.

Not four years.

PODOS is a factory-built modular AI supercomputer. Power, cooling, networking, fire, seismic — integrated and pressure-tested before it leaves California. Sites prepare the pad. The pod brings the data center.

POD-0042 · REV·E · CONFIDENTIAL — SEED · 2026

8× DENSITY. LIQUID COOLED. BUILT TO SCALE.

High-density GPU infrastructure in a modular, liquid-cooled pod.

- 8× HIGH-DENSITY GPU MODULES

- CLOSED-LOOP LIQUID COOLING

- HIGH-CAPACITY POWER DELIVERY

- HIGH-SPEED NETWORK FABRIC

- RUGGED. SECURE. BUILT TO DEPLOY.

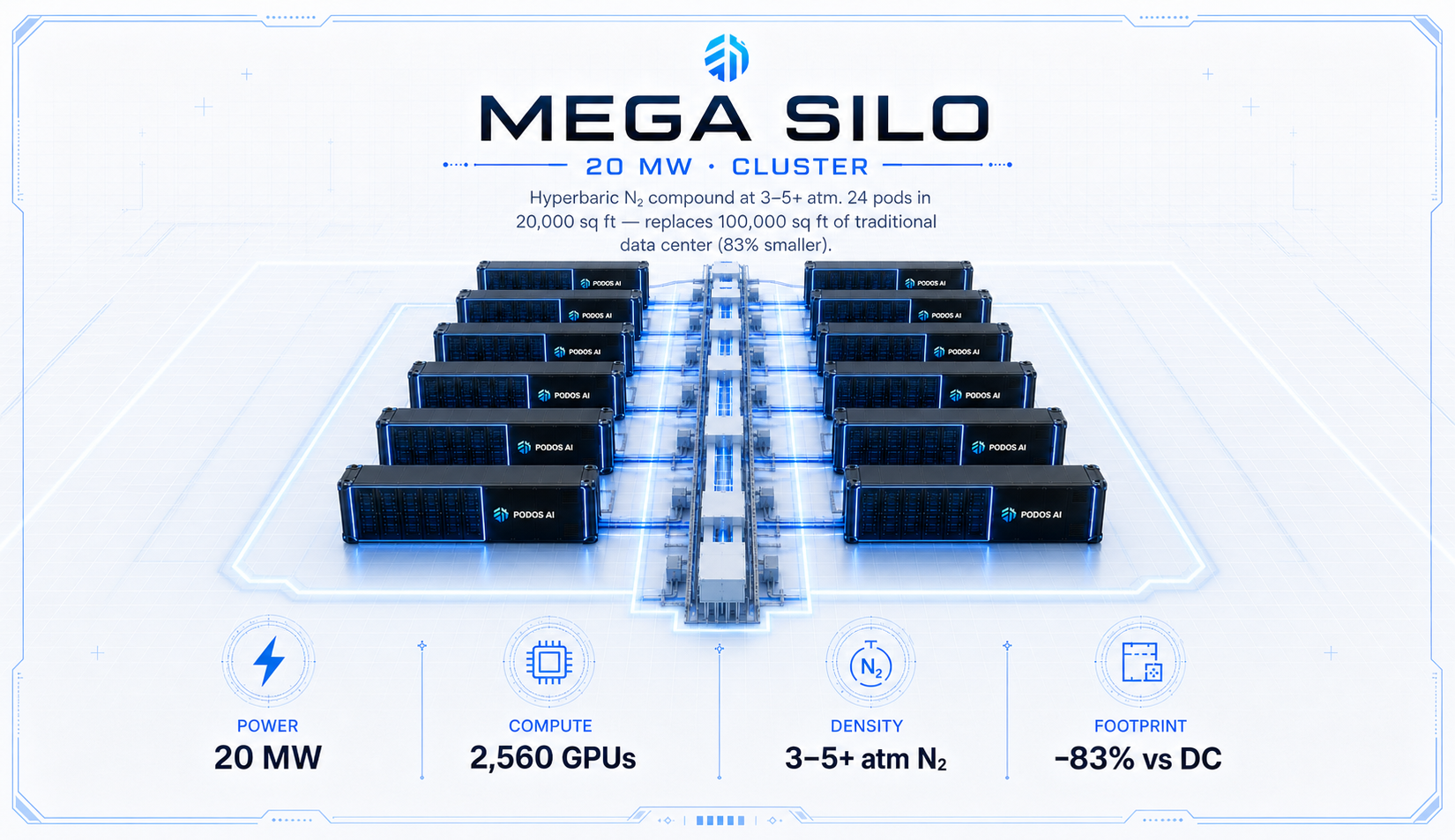

Same DNA, two scales.

Unit economics are proven at 1 MW · cluster economics unlock at 20 MW.

Infrastructure designed for faster, simpler deployment.

Every PODOS module is engineered to reduce deployment friction, increase resilience, and improve infrastructure economics.

Thermos enclosure

Multi-layer foam core + reflective vapor barrier on all six surfaces. Thermal delta held steady regardless of climate — Arctic field to Phoenix tarmac, same PUE.

ORC heat engine

Organic Rankine Cycle on the waste-heat loop recaptures 60–110 kW as grid-synchronous electricity. Adds revenue per pod without any additional footprint.
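
To put the 60–110 kW figure in context, the sketch below expresses it as a share of pod power and as annual energy. It assumes a 1 MW pod (taken from the "one megawatt" deployment figure; the source does not say whether that is IT load or total draw) and continuous year-round operation. The function names and the `utilization` parameter are hypothetical.

```python
HOURS_PER_YEAR = 8760  # non-leap year; continuous operation is an assumption


def recovered_fraction(orc_kw: float, pod_kw: float = 1000.0) -> float:
    """Share of pod power returned as electricity by the ORC loop.

    pod_kw defaults to 1000 kW, an assumed 1 MW pod.
    """
    return orc_kw / pod_kw


def annual_energy_mwh(orc_kw: float, utilization: float = 1.0) -> float:
    """Energy recaptured per year in MWh at a given utilization (0 to 1)."""
    return orc_kw * HOURS_PER_YEAR * utilization / 1000.0


if __name__ == "__main__":
    for kw in (60.0, 110.0):  # the range quoted for the ORC loop
        share = recovered_fraction(kw)
        energy = annual_energy_mwh(kw)
        print(f"{kw:.0f} kW: {share:.1%} of pod power, {energy:,.1f} MWh per year")
```

On those assumptions the recaptured power is roughly 6% to 11% of a 1 MW pod. Any revenue estimate would additionally depend on a local electricity price, which is not given here.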

Off-grid ready

Solar roof, battery bank, and backup generator are integrated. Deploy to remote edge sites without fiber or utility interconnect, on the same 90–120 day timeline, anywhere on the map.

Zero water · zero concrete

Closed-loop direct-to-chip liquid cooling means no cooling towers, no water-rights negotiation, no slab permits. The pod lands on gravel or asphalt and starts serving inference.

From factory to facility.

Built for organizations that need controlled AI infrastructure.

From research and clinical environments to manufacturing floors and edge sites, PODOS pods deploy AI compute where it's actually used.

Enterprise AI teams

Internal compute for training, inference, and AI product development — close to the data.

Healthcare facilities

On-prem AI infrastructure for clinical, imaging, and research workloads inside the compliance boundary.

Universities & research labs

Dedicated compute for research groups without competing for shared cluster time.

Manufacturing sites

On-floor compute for industrial AI, vision systems, and process intelligence.

Financial institutions

Inference and modeling capacity inside controlled, audit-friendly infrastructure.

Government & secure environments

Pods for restricted facilities and operations that require physical and network control.

Edge AI deployments

Compute placed where data is generated: closer to operations, lower latency.

Supplemental capacity

Add headroom to existing infrastructure without waiting years for new construction.

Built through modular manufacturing discipline.

PODOS brings modular construction principles to AI infrastructure. Pods are engineered in a controlled production environment and delivered for site deployment.

- Factory-built approach. Pods are produced as engineered infrastructure products, not site-fabricated assemblies.

- Consistent production process. A repeatable build sequence reduces variability and improves predictability.

- Faster deployment path. Off-site construction runs in parallel with site preparation, compressing the overall timeline.

- Controlled quality. Production-environment QA replaces field rework as the primary quality lever.

- Repeatable pod architecture. A common architecture across deployments simplifies operations, spares, and upgrades.

Engineered for deployment, density, and control.

PODOS pods are built around six engineering pillars that together define what a deployable AI compute unit is supposed to be.

Compute density

Engineered to host high-performance accelerator configurations within a single deployable unit.

Cooling architecture

Thermal design matched to AI compute load, sustained under continuous operation.

Power readiness

Power distribution sized for high-density compute and configured for site interconnect.

Physical security

Hardened enclosure with controlled access and environmental protection.

Operational flexibility

Configurable for different compute profiles, deployment contexts, and operational models.

Deployment speed

Designed end-to-end for fast site commissioning and compressed time-to-capacity.

Meet the operators behind modular compute

A small team with deep operational experience across data center construction, industrial manufacturing, and AI infrastructure.

Josef Elimelech

Founder & Inventor. Creator of all 76+ patent claims across both platforms and inventor of record on every USPTO filing. Technical architect of PODOS Pod, MEGA SILO, Syntropic, and Optimus.

Greg McNulty

Chief Executive Officer. Former Microsoft executive. Enterprise-scale operational leadership and institutional investor relationships taking PODOS AI from invention to global market.

Mike Sherman

Chief Technology Officer. Built the Syntropic GPU benchmark suite, which validated 99.6% quality preservation on Mistral-7B across 3 GPU platforms. Engineering lead for Optimus and Syntropic.

Barbara Liebeck

VP Sales & Business Development. Enterprise account management across AI infrastructure. Leads the customer pipeline for EcoSynQ, the Israel market, and hyperscaler prospects.

Rafael Smadja

Graphic Designer & Web. Brand identity, thesyntropic.com, and PODOS AI web presence. Translates the technical platform into investor-grade visual communications.

Need AI compute capacity without a multi-year infrastructure timeline?

Talk with PODOS AI about modular compute pod deployment options for your facility.

The fastest path to a deployment conversation

Reach out by phone, email, or stop by the factory. We respond to every inquiry within 72 hours.